Load Balancing: Distributing Traffic for High Availability

Comprehensive guide to load balancing strategies, algorithms, and implementation. Learn how to distribute traffic across multiple servers effectively.

What is Load Balancing?

Load balancing distributes incoming network traffic across multiple servers to ensure:

- No single server is overwhelmed

- High availability (if one server fails, others take over)

- Better resource utilization

- Improved response times

Think of it like checkout lines at a supermarket - instead of one long line, you have multiple cashiers serving customers in parallel.

Load Balancing Algorithms

1. Round Robin

Distributes requests sequentially across servers.

Request 1 → Server A

Request 2 → Server B

Request 3 → Server C

Request 4 → Server A (back to start)

Pros:

- Simple to implement

- Fair distribution

- Works well when servers have similar capacity

Cons:

- Doesn’t consider server load

- Doesn’t account for server capacity differences

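    class RoundRobinBalancer(private val servers: List<String>) {
        // Index of the server that receives the next request.
        // Note: a plain Int is not safe for concurrent callers.
        private var current = 0

        fun getNextServer(): String {
            val server = servers[current]
            current = (current + 1) % servers.size // wrap around to the first server
            return server
        }
    }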
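
A load balancer usually serves many requests at once, so the rotation counter may be touched from several threads. The following is a minimal sketch of a thread-safe variant, assuming a JVM target; the class name `AtomicRoundRobinBalancer` is hypothetical and not part of the original example.

```kotlin
import java.util.concurrent.atomic.AtomicInteger

// Hypothetical thread-safe variant of the round-robin balancer.
class AtomicRoundRobinBalancer(private val servers: List<String>) {
    private val counter = AtomicInteger(0)

    init {
        require(servers.isNotEmpty()) { "At least one server is required" }
    }

    fun getNextServer(): String {
        // getAndIncrement is atomic; floorMod keeps the index non-negative
        // even after the counter overflows past Int.MAX_VALUE.
        val index = Math.floorMod(counter.getAndIncrement(), servers.size)
        return servers[index]
    }
}
```

The rotation order is the same as in the simple version; only the counter handling differs.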

2. Weighted Round Robin

Like round robin, but servers with higher weights get more requests.

    data class WeightedServer(val host: String, val weight: Int)

    class WeightedRoundRobinBalancer(servers: List<WeightedServer>) {
        private val expandedServers = mutableListOf<String>()
        private var current = 0

        init {
            // Expand based on weights
            servers.forEach { server ->
                repeat(server.weight) {
                    expandedServers.add(server.host)
                }
            }
        }

        fun getNextServer(): String {
            val server = expandedServers[current]
            current = (current + 1) % expandedServers.size
            return server
        }
    }

    // Usage
    val balancer = WeightedRoundRobinBalancer(
        listOf(
            WeightedServer("powerful-server", 5),
            WeightedServer("medium-server", 3),
            WeightedServer("small-server", 1)
        )
    )
    // powerful-server gets 5x more requests than small-server
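
One side effect of expanding the list is that a heavy server receives its whole share in a burst (five requests in a row in the usage above). A variant often called smooth weighted round robin keeps the same ratios but interleaves the picks. The sketch below is one way to write it; the class name `SmoothWeightedBalancer` and the map-based constructor are assumptions made for illustration.

```kotlin
// Hypothetical names; a sketch of "smooth" weighted round robin.
class SmoothWeightedBalancer(weights: Map<String, Int>) {
    private class Node(val host: String, val weight: Int, var current: Int = 0)

    private val nodes = weights.map { (host, weight) -> Node(host, weight) }
    private val totalWeight = nodes.sumOf { it.weight }

    init {
        require(nodes.isNotEmpty() && nodes.all { it.weight > 0 }) {
            "Need at least one server, and all weights must be positive"
        }
    }

    @Synchronized
    fun getNextServer(): String {
        // Every server earns its weight, the current leader is picked,
        // and the leader then pays back the total weight.
        nodes.forEach { it.current += it.weight }
        val best = nodes.maxByOrNull { it.current }!!
        best.current -= totalWeight
        return best.host
    }
}
```

With weights 5, 3, and 1, nine consecutive picks still split 5/3/1, but the order starts powerful, medium, powerful, small instead of five powerful picks in a row.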

3. Least Connections

Routes to the server with the fewest active connections.

    data class ServerConnection(
        val host: String,
        var connections: Int = 0
    )

    class LeastConnectionsBalancer(servers: List<String>) {
        private val servers = servers.map { ServerConnection(it) }

        fun getNextServer(): String {
            // Find server with minimum connections
            val server = servers.minByOrNull { it.connections }
                ?: throw IllegalStateException("No servers available")
            server.connections++
            return server.host
        }

        // Call when a request finishes; the count never drops below zero
        fun releaseConnection(host: String) {
            servers.find { it.host == host }?.let {
                if (it.connections > 0) it.connections--
            }
        }
    }

Best for: Long-lived connections (WebSockets, database connections)

4. Least Response Time

Routes to the server with the lowest average response time.

    data class ServerMetrics(
        val host: String,
        var avgResponseTime: Double = 0.0,
        var requestCount: Int = 0
    )

    class LeastResponseTimeBalancer(servers: List<String>) {
        private val servers = servers.map { ServerMetrics(it) }

        fun getNextServer(): String {
            // Find server with lowest average response time
            // (servers without samples start at 0.0, so they are tried first)
            val server = servers.minByOrNull { it.avgResponseTime }
                ?: throw IllegalStateException("No servers available")
            return server.host
        }

        fun recordResponse(host: String, responseTime: Double) {
            servers.find { it.host == host }?.let { server ->
                // Running mean over all samples seen so far
                server.avgResponseTime =
                    (server.avgResponseTime * server.requestCount + responseTime) /
                        (server.requestCount + 1)
                server.requestCount++
            }
        }
    }
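
A running mean over all samples weighs an old measurement the same as a new one, so a server that has recently slowed down loses traffic only gradually. One common alternative is an exponentially weighted moving average (EWMA), which favors recent samples. The sketch below assumes a smoothing factor `alpha` of 0.2 as a default; that value and the class name `EwmaResponseTimeBalancer` are illustrative choices, not taken from the original.

```kotlin
// Hypothetical sketch: exponentially weighted moving average (EWMA) of latency.
class EwmaResponseTimeBalancer(
    private val hosts: List<String>,
    private val alpha: Double = 0.2 // assumed default; higher reacts faster
) {
    // Hosts without an entry have no sample yet and are tried first.
    private val ewma = HashMap<String, Double>()

    init {
        require(hosts.isNotEmpty()) { "At least one server is required" }
        require(alpha > 0.0 && alpha <= 1.0) { "alpha must be in (0, 1]" }
    }

    @Synchronized
    fun getNextServer(): String =
        hosts.minByOrNull { ewma[it] ?: Double.NEGATIVE_INFINITY }!!

    @Synchronized
    fun recordResponse(host: String, responseTimeMs: Double) {
        if (host !in hosts) return // ignore unknown hosts
        val previous = ewma[host]
        ewma[host] =
            if (previous == null) responseTimeMs
            else alpha * responseTimeMs + (1 - alpha) * previous
    }
}
```

With `alpha = 0.5`, a server averaging 100 ms that returns one 900 ms response moves to 500 ms immediately, which is enough to shift traffic to a peer sitting at 300 ms.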

5. IP Hash / Consistent Hashing

Routes based on a hash of the client IP, so the same client always reaches the same server as long as the server list does not change.

    class IPHashBalancer(private val servers: List<String>) {
        fun getServerForIP(clientIP: String): String {
            // floorMod keeps the index non-negative even when the hash is negative
            val index = Math.floorMod(hashIP(clientIP), servers.size)
            return servers[index]
        }

        private fun hashIP(ip: String): Int {
            var hash = 0
            ip.forEach { char ->
                // Same as hash * 31 + char.code; Int arithmetic already wraps at 32 bits
                hash = ((hash shl 5) - hash) + char.code
            }
            return hash
        }
    }

Best for: Session affinity (sticky sessions)
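
With plain modulo hashing, adding or removing a server changes `servers.size` and remaps most clients. Consistent hashing limits that: servers are placed on a ring, and each key goes to the next server clockwise, so removing a server only moves the keys that server owned. The following is a minimal sketch under stated assumptions: the class name `ConsistentHashRing`, the 100 virtual nodes per server, and the use of MD5 for spreading keys are all illustrative choices.

```kotlin
import java.security.MessageDigest
import java.util.TreeMap

// Hypothetical sketch of a consistent-hash ring with virtual nodes.
class ConsistentHashRing(servers: List<String>, private val virtualNodes: Int = 100) {
    private val ring = TreeMap<Long, String>()

    init {
        servers.forEach { addServer(it) }
    }

    fun addServer(server: String) {
        // Several ring positions per server even out the distribution.
        repeat(virtualNodes) { i -> ring[hash("$server#$i")] = server }
    }

    fun removeServer(server: String) {
        repeat(virtualNodes) { i -> ring.remove(hash("$server#$i")) }
    }

    fun getServerFor(key: String): String {
        check(ring.isNotEmpty()) { "No servers available" }
        // First ring position at or after the key's hash, wrapping to the start.
        val entry = ring.ceilingEntry(hash(key)) ?: ring.firstEntry()
        return entry.value
    }

    // First 8 bytes of an MD5 digest as a Long; used for spread, not security.
    private fun hash(value: String): Long {
        val digest = MessageDigest.getInstance("MD5").digest(value.toByteArray())
        var result = 0L
        for (i in 0 until 8) result = (result shl 8) or (digest[i].toLong() and 0xFFL)
        return result
    }
}
```

Calling `getServerFor(clientIP)` gives the same affinity as the IP hash above, while a later `removeServer` leaves every client of the remaining servers where it was.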

6. Random

Randomly selects a server. Simple but surprisingly effective.

    class RandomBalancer(private val servers: List<String>) {
        fun getNextServer(): String {
            // Pick a uniformly random server
            return servers.random()
        }
    }

Types of Load Balancers

Layer 4 (Transport Layer) Load Balancing

Routes based on IP address and TCP/UDP port.

Characteristics:

- Fast (only inspects IP addresses and ports, not the request content)

- Can’t inspect HTTP headers

- Protocol agnostic

    # Nginx TCP load balancing
    stream {
        upstream backend {
            server backend1.example.com:3000;
            server backend2.example.com:3000;
            server backend3.example.com:3000;
        }

        server {
            listen 80;
            proxy_pass backend;
        }
    }

Layer 7 (Application Layer) Load Balancing

Routes based on HTTP headers, cookies, URL path, etc.

Characteristics:

- More intelligent routing

- Can route based on content

- Slightly slower (needs to parse HTTP)

    # Nginx HTTP load balancing
    http {
        upstream api_servers {
            server api1.example.com:3000;
            server api2.example.com:3000;
        }

        upstream static_servers {
            server static1.example.com:80;
            server static2.example.com:80;
        }

        server {
            listen 80;

            # Route API requests to API servers
            location /api {
                proxy_pass http://api_servers;
            }

            # Route static content to static servers
            location /static {
                proxy_pass http://static_servers;
            }
        }
    }

Health Checks

Essential for high availability - only route to healthy servers.

    import java.util.concurrent.Executors
    import java.util.concurrent.TimeUnit
    import java.util.concurrent.atomic.AtomicInteger
    import org.springframework.stereotype.Component
    import org.springframework.web.client.RestTemplate

    data class HealthyServer(
        val host: String,
        // Written by the health-check thread, read by request threads
        @Volatile var healthy: Boolean = true,
        var failedChecks: Int = 0
    )

    @Component
    class LoadBalancerWithHealthCheck(
        servers: List<String>,
        private val restTemplate: RestTemplate
    ) {
        private val servers = servers.map { HealthyServer(it) }
        private val counter = AtomicInteger(0)

        init {
            // Check health every 10 seconds
            Executors.newScheduledThreadPool(1).scheduleAtFixedRate(
                { checkHealth() },
                0,
                10,
                TimeUnit.SECONDS
            )
        }

        private fun checkHealth() {
            servers.forEach { server ->
                try {
                    val response = restTemplate.getForEntity(
                        "http://${server.host}/health",
                        String::class.java
                    )
                    if (response.statusCode.is2xxSuccessful) {
                        server.healthy = true
                        server.failedChecks = 0
                    } else {
                        handleFailedCheck(server)
                    }
                } catch (e: Exception) {
                    // Timeouts, refused connections, and error statuses land here
                    handleFailedCheck(server)
                }
            }
        }

        private fun handleFailedCheck(server: HealthyServer) {
            server.failedChecks++
            // Mark unhealthy after 3 consecutive failures
            if (server.failedChecks >= 3) {
                server.healthy = false
                println("Server ${server.host} marked as unhealthy")
            }
        }

        fun getNextServer(): String {
            val healthyServers = servers.filter { it.healthy }
            if (healthyServers.isEmpty()) {
                throw IllegalStateException("No healthy servers available")
            }
            // Use round robin on healthy servers
            val index = Math.floorMod(counter.getAndIncrement(), healthyServers.size)
            return healthyServers[index].host
        }
    }

Session Persistence (Sticky Sessions)

Ensure that a user’s requests keep going to the same server.

Cookie-Based

    upstream backend {
        ip_hash; # Simple approach: stickiness by client IP, no cookie needed
        server backend1.example.com;
        server backend2.example.com;
    }

    # Or use cookie-based stickiness
    # (the sticky directive is only available in the commercial NGINX Plus)
    upstream backend {
        server backend1.example.com;
        server backend2.example.com;
        sticky cookie srv_id expires=1h;
    }

Application-Level

Store sessions in shared storage:

    // Instead of server memory
    app.use(session({
        store: new RedisStore({ client: redisClient }),
        secret: 'your-secret',
        resave: false,
        saveUninitialized: false
    }));

Load Balancer Solutions

Software Load Balancers

Nginx

    upstream backend {
        least_conn; # Algorithm
        server backend1.example.com:3000 weight=5;
        server backend2.example.com:3000 weight=3;
        server backend3.example.com:3000 backup;
    }

    server {
        listen 80;

        location / {
            proxy_pass http://backend;
            proxy_set_header X-Real-IP $remote_addr;
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        }
    }

HAProxy

    frontend http_front
        bind *:80
        default_backend servers

    backend servers
        balance leastconn
        option httpchk GET /health
        server server1 192.168.1.10:3000 check
        server server2 192.168.1.11:3000 check
        server server3 192.168.1.12:3000 check

Cloud Load Balancers

- AWS ELB (Elastic Load Balancer)

  - ALB (Application Load Balancer) - Layer 7

  - NLB (Network Load Balancer) - Layer 4

- Google Cloud Load Balancing

- Azure Load Balancer

Best Practices

1. Use Health Checks

Always monitor server health and remove unhealthy servers from rotation.

2. Enable SSL Termination at Load Balancer

    server {
        listen 443 ssl;
        ssl_certificate /path/to/cert.pem;
        ssl_certificate_key /path/to/key.pem;

        location / {
            # Forward to backend as HTTP
            proxy_pass http://backend;
        }
    }

3. Implement Connection Draining

Gracefully finish existing requests before removing a server.

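    // Resolves after the given number of milliseconds
    const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

    async function drainServer(server) {
        // Stop sending new requests
        server.accepting = false;

        // Wait for existing connections to finish
        while (server.activeConnections > 0) {
            await sleep(1000);
        }

        // Now safe to remove (removeServer is assumed to be defined elsewhere)
        removeServer(server);
    }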

4. Monitor Key Metrics

- Request rate per server

- Response times

- Error rates

- Active connections

- Server health status
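
As a rough illustration of how these numbers could be collected inside a balancer, the sketch below keeps per-server counters in memory. The names `ServerStats` and `MetricsRegistry` are hypothetical, and a real deployment would more likely export the counters to a monitoring system than read them in process.

```kotlin
import java.util.concurrent.ConcurrentHashMap
import java.util.concurrent.atomic.AtomicInteger
import java.util.concurrent.atomic.AtomicLong

// Hypothetical per-server counters for the metrics listed above.
class ServerStats {
    val requests = AtomicLong()
    val errors = AtomicLong()
    val totalLatencyMs = AtomicLong()
    val activeConnections = AtomicInteger()

    fun errorRate(): Double =
        if (requests.get() == 0L) 0.0 else errors.get().toDouble() / requests.get()

    fun avgLatencyMs(): Double =
        if (requests.get() == 0L) 0.0 else totalLatencyMs.get().toDouble() / requests.get()
}

class MetricsRegistry {
    private val stats = ConcurrentHashMap<String, ServerStats>()

    fun statsFor(host: String): ServerStats = stats.computeIfAbsent(host) { ServerStats() }

    fun requestStarted(host: String) {
        statsFor(host).activeConnections.incrementAndGet()
    }

    fun requestFinished(host: String, latencyMs: Long, success: Boolean) {
        val s = statsFor(host)
        s.activeConnections.decrementAndGet()
        s.requests.incrementAndGet()
        s.totalLatencyMs.addAndGet(latencyMs)
        if (!success) s.errors.incrementAndGet()
    }
}
```

Request rate is not stored directly here; it can be derived by sampling `requests` at fixed intervals and taking the difference.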

5. Plan for Failover

Have backup servers ready to handle traffic if primary servers fail.
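
A minimal sketch of that idea, similar in spirit to the `backup` flag in the Nginx example earlier: traffic rotates over healthy primaries and only falls back to the backup pool when no primary is healthy. The class name `FailoverBalancer` and the `isHealthy` callback are assumptions; in practice the callback would be backed by the health checks described above.

```kotlin
// Hypothetical sketch: prefer primaries, fall back to backups.
class FailoverBalancer(
    private val primaries: List<String>,
    private val backups: List<String>,
    private val isHealthy: (String) -> Boolean
) {
    private var next = 0

    @Synchronized
    fun getNextServer(): String {
        // Use the backup pool only when no primary is healthy.
        val pool = primaries.filter(isHealthy).ifEmpty { backups.filter(isHealthy) }
        check(pool.isNotEmpty()) { "No healthy servers available" }
        val server = pool[next % pool.size]
        next = (next + 1) and Int.MAX_VALUE // stay non-negative on overflow
        return server
    }
}
```

As soon as a primary reports healthy again, the next call returns to the primary pool without any extra switch-back logic.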

Common Patterns

Geographic Load Balancing

Route users to the nearest data center:

US East users → us-east-1 servers

EU users → eu-west-1 servers

Asia users → ap-southeast-1 servers
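
The mapping above can be sketched as a simple lookup with a fallback. How the client's region is determined (for example via GeoIP or DNS) is outside this sketch; the class name `GeoRouter`, the region keys, and the default-region fallback are assumptions made for illustration.

```kotlin
// Hypothetical region-to-pool lookup with a default region as fallback.
class GeoRouter(
    private val poolsByRegion: Map<String, List<String>>,
    private val defaultRegion: String
) {
    init {
        require(!poolsByRegion[defaultRegion].isNullOrEmpty()) {
            "The default region needs at least one server"
        }
    }

    // Returns the pool for the client's region, or the default pool
    // when the region is unknown or has no servers.
    fun poolFor(clientRegion: String): List<String> =
        poolsByRegion[clientRegion]?.takeIf { it.isNotEmpty() }
            ?: poolsByRegion.getValue(defaultRegion)
}

// Usage (region keys are illustrative)
val router = GeoRouter(
    mapOf(
        "us-east" to listOf("us-east-1"),
        "eu" to listOf("eu-west-1"),
        "asia" to listOf("ap-southeast-1")
    ),
    defaultRegion = "us-east"
)
```

Any of the algorithms described earlier can then pick a server within the returned pool.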

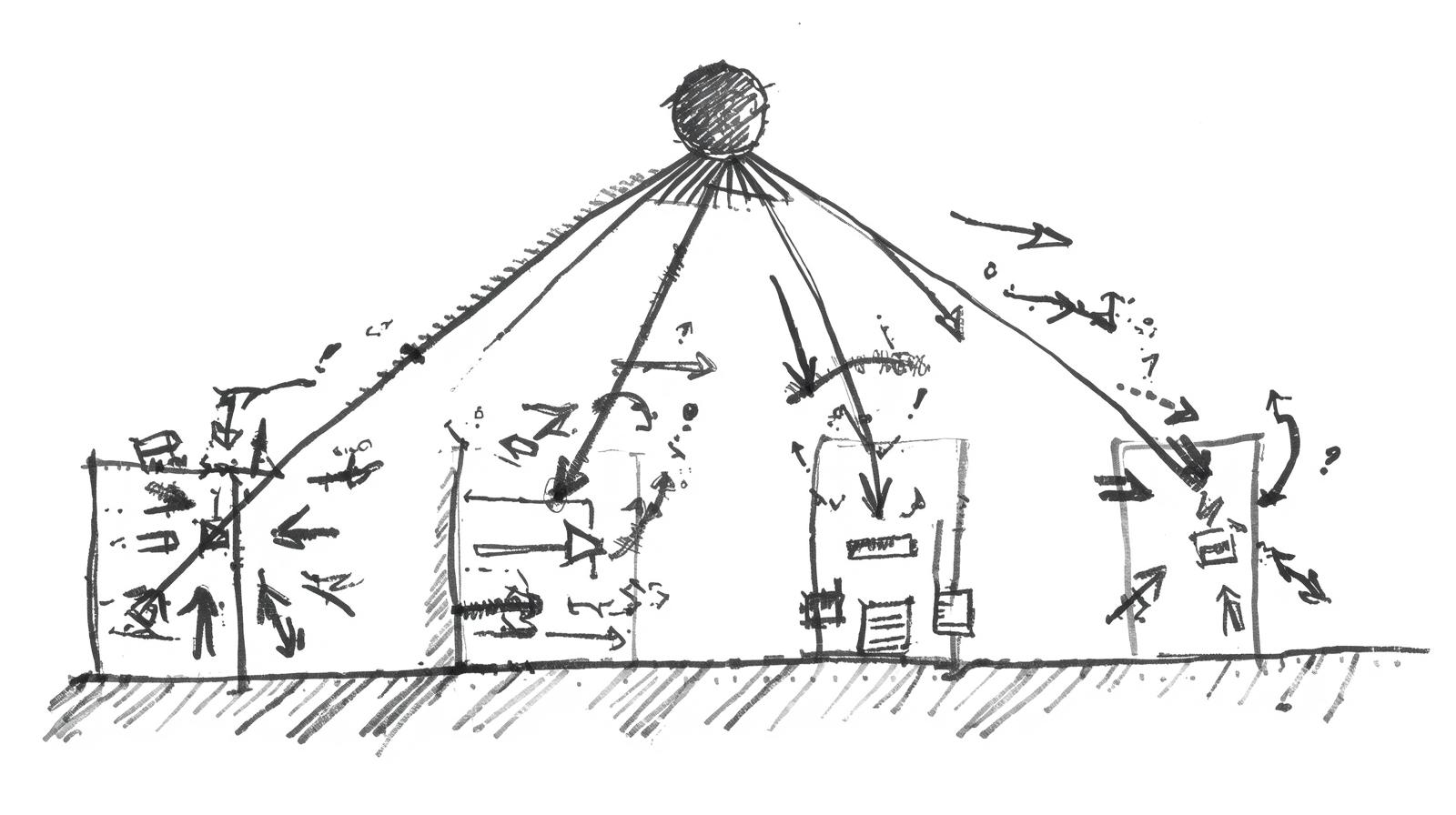

Microservices Load Balancing

Each service has its own load balancer:

                     API Gateway
                          ↓
            ┌─────────────┼─────────────┐
         User LB      Order LB     Product LB
            ↓             ↓             ↓
        User Svc      Order Svc    Product Svc

Auto-Scaling

Automatically add/remove servers based on load:

    if (averageCPU > 80) {
        scaleUp();
    }

    if (averageCPU < 20 && serverCount > minServers) {
        scaleDown();
    }
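
Threshold rules like these can flap when load hovers around a limit, adding and removing servers in quick succession. A common mitigation is a cooldown between scaling actions plus an upper bound on the fleet size. The sketch below reuses the 80% and 20% thresholds from the snippet above; the class name `AutoScalingPolicy`, the `maxServers` bound, and the five-minute default cooldown are assumptions.

```kotlin
enum class ScalingAction { SCALE_UP, SCALE_DOWN, NONE }

// Hypothetical policy object; thresholds mirror the snippet above.
class AutoScalingPolicy(
    private val minServers: Int,
    private val maxServers: Int,
    private val cooldownMs: Long = 300_000 // assumed default: 5 minutes
) {
    private var lastActionAt: Long? = null

    fun decide(averageCpu: Double, serverCount: Int, nowMs: Long): ScalingAction {
        val last = lastActionAt
        // Skip decisions during the cooldown so the fleet does not flap.
        if (last != null && nowMs - last < cooldownMs) return ScalingAction.NONE

        val action = when {
            averageCpu > 80 && serverCount < maxServers -> ScalingAction.SCALE_UP
            averageCpu < 20 && serverCount > minServers -> ScalingAction.SCALE_DOWN
            else -> ScalingAction.NONE
        }
        if (action != ScalingAction.NONE) lastActionAt = nowMs
        return action
    }
}
```

The function only decides; the caller remains responsible for actually starting or stopping servers and for passing in the current time.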

Conclusion

Load balancing is essential for building scalable, highly available systems. Key takeaways:

- Choose the right algorithm for your use case

- Always implement health checks

- Monitor your load balancer metrics

- Plan for failures

- Start simple and add complexity as needed

Most importantly: test your load balancing setup under realistic traffic conditions before going to production!

Happy balancing! ⚖️